Announced January 29, 2015, SQL Azure now (natively) supports row-level security. I'm not going to explain how to implement this, the link I just provided does an excellent job at that. I think it is a really cool feature because it supports a lot of scenario's where one user/department/tenant/etc isn't allowed to see data from another user/department/tenant/etc. It prevents you from having to write queries throughout your codebase that end with: where UserId = 'johndoe' or where DepartmentId = 42. There's always a risk you forget to do this somewhere, causing data-leakage. Instead, you provide a context to run your queries in and let a security policy decide what data to return to the client.

But, you might ask, the problem of appending a where-clause to each query is now replaced by the problem of specifying a context before each query. What have I actually gained? Well, when using Entity Framework, it is relatively easy to intercept all queries when they're sent to the database and modify them. We're going to use this technique to make sure context is provided before each query. And actually there is another way to accomplish the same that may even be better, I haven't tested this thoroughly yet. I'll describe this technique at the end.

Set the stage

But first we need to set the stage. I'll describe all the steps here so that you can repeat them on your own SQL Azure database. First, we create a simple table that has the data we wish to secure with a security policy.

create table SecretTenantInfo ( Id int primary key identity(1, 1), SecretInfo nvarchar(50) not null, Context nvarchar(8) not null )

The column of interest is Context where we store the context for a specific tenant. Info for all tenants is stored in the same table so it is important no tenant ever sees the SecretInfo of the other tenants.

Next, we create a stored procedure that allows us to easily set the CONTEXT_INFO for a particular tenant. To separate security concerns from business concerns, this stored procedure is created in another schema: security.

create schema [security] go create procedure [security].sp_setContextInfo @context [nvarchar](8) as begin declare @contextBinary varbinary(128) = convert(varbinary(128), @context, 0); set context_info @contextBinary; end go

Note that the information stored in CONTEXT_INFO has a maximum length of 128 bytes so in this example I cast the incoming nvarchar(8) to a varbinary(128). Next we need a table-valued function that takes a context as input and returns a row for each target table row that satisfies a certain condition.

create function [security].fn_contextSecurityPredicate(@context [nvarchar](8))

returns table

with schemabinding

as

return

select 1 as fn_contextSecurityPredicateResult

where convert(varbinary(128), @context, 0) = context_info()

Note that this predicate function is similar to a function you could use in a CROSS or OUTER APPLY statement. In fact, let's try and see what happens if we use this function in an OUTER APPLY. First we add two rows to our table:

insert into SecretTenantInfo (SecretInfo, Context)

values ('Tenant_0', 'Ctx_0'), ('Tenant_1', 'Ctx_1')

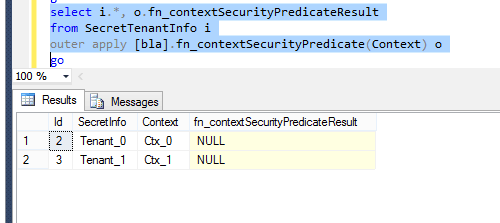

And then we run the following SQL statement:

select i.*, o.fn_contextSecurityPredicateResult from SecretTenantInfo i outer apply [security].fn_contextSecurityPredicate(Context) o

The result is as follows:

As you can see, NULL is returned as the predicate value for each row in the table since we haven't set a context yet. If we would change the OUTER APPLY to a CROSS APPLY, no data would be returned. So internally SQL Azure uses a CROSS APPLY (or something very similar) to enforce a security policy. Speaking of security policies, this is the last part of our database setup.

create security policy security.contextFilter

add filter predicate security.fn_contextSecurityPredicate(Context) on dbo.SecretTenantInfo

with (state = on)

Now that we have a security policy in place, no data is returned from any select statements on our SecretTenantInfo if we haven't provided the right context.

Entity Framework (solution 1)

Our first solution uses the IDbCommandInterceptor interface. This interface has three method pairs: NonQueryExecuting/NonQueryExecuted, ReaderExecuting/ReaderExecuted and ScalarExecuting/ScalarExecuted. We'd like to modify queries before they arrive at the database so we need the Executing methods. In the example below I user the ReaderExecuting method, the other two have a similar implementation.

public class RLSCommandInterceptor : IDbCommandInterceptor

{

public void ReaderExecuting(DbCommand command,

DbCommandInterceptionContext interceptionContext)

{

// Check if we are running the query on the right context.

if (interceptionContext.DbContexts.Count() == 1 &&

interceptionContext.DbContexts.Single() is ConnectorContext)

{

var rowLevelSecurityContext = ...get context...;

command.CommandText =

"EXEC [security].[sp_setContextInfo] '" + rowLevelSecurityContext + "';rn" +

command.CommandText;

}

}

......

}

First we check whether the query is actually run against the right Entity Framework context (which in my case is called ConnectorContext). This check isn't absolutely necessary but if you enable migrations, Entity Framework runs a number of queries on startup that do not really need a context. Next we need to acquire our current context. It depends on your application what that is. It may be a user id obtained from the current thread or a tenant id obtained from the environment. And finally we modify the SQL that gets sent to SQL Azure. All queries now automatically have the right context set.

Configuration of a command interceptor can be done in code or in a configuration file and is described here.

Entity Framework (solution 2)

As I promised, there is another solution for the same problem. A disadvantage of the described approach is that a context is set for every request which isn't necessary. Context info is session- or connection-scoped so we need to set it just once per session or connection. PLEASE NOTE that I haven't thoroughly tested the next part yet. If I encounter any problems I'll update this post.

When opening an Entity Framework DbContext, there is an opportunity to run SQL statements as illustrated in the following code.

public class ConnectorContext : DbContext

{

public ConnectorContext() : base("ConnectorContext")

{

// Open connection to database to enable setting context info.

var conn = Database.Connection;

conn.Open();

var rowLevelSecurityContext = ...get context...;

Database.ExecuteSqlCommand(

"EXEC [security].[sp_setContextInfo] {0}", rowLevelSecurityContext);

}

......

}

So now, instead of providing context for every query, we provide it once on the connection when opening the database context. As I said I haven't really begun testing this but I think this is a problem-free way to make use of SQL Azure row-level security without bothering the rest of your codebase with this concern.

UPDATE

As I said already, solution 2 hadn't been very well tested yet. Well, it turns out there are two problems. First of all, in the DbContext constructor I call a stored procedure. This procedure is created by one of my migrations. If you start with an empty database, this migration has not yet been applied so the stored procedure does not yet exist (a classic chicken-and-egg problem). Second of all, opening a database connection in the constructor of a DbContext breaks Entity Framework migrations. It took me a while to figure this out because the error messages point in an entirely different direction. When you attempt an Add-Migration, you get the following error:

Unable to generate an explicit migration because the following explicit migrations are pending: [201507021605136_InitialCreate, 201507030629460_SamlTokenXmlRequired, ..., 201507081458529_CreateSecurityPolicy]. Apply the pending explicit migrations before attempting to generate a new explicit migration.

That's weird because I'm pretty sure all these migrations are already applied. My next step was to try an Update-Database. This gave me the following message:

System.InvalidOperationException: This operation requires a connection to the 'master' database. Unable to create a connection to the 'master' database because the original database connection has been opened and credentials have been removed from the connection string. Supply an unopened connection. ---> System.Data.SqlClient.SqlException: Login failed for user ''.

Actually this gives a hint in the right direction but when pasting the error message into Google you are pointed in an entirely different direction. Finally I tried providing an explicit target migration to Update-Database but that resulted in yet another error:

There is already an object named 'SsoInfo' in the database.

In my InitialCreate I happen to create a table named SsoInfo so Update-Database just attempts to start at the first migration and continue from there. I'm still in a situation where I can just drop my database and start over so I tried that as well but the above errors kept appearing.

After backtracking my changes I figured out that opening a connection in the DbContext constructor is the problem. So what we actually want is to 'know' in the constructor whether we are using the context at runtime or at design-time (when adding migrations, for example). It turns out this is possible by adding an implementation of the IDbContextFactory<TContext> interface to the same assembly that has your DbContext implementation. Mine is as follows:

public class MigrationDbContextFactory : IDbContextFactory

{

public ConnectorContext Create()

{

return new ConnectorContext(isMigrationsMode: true);

}

}

The ConnectorContext constructor has changed as well:

public ConnectorContext(bool isMigrationsMode = false) : base("ConnectorContext")

{

if (!isMigrationsMode)

{

// Open connection to database to enable setting context info.

var conn = Database.Connection;

conn.Open();

var rowLevelSecurityContext = ...get context...;

Database.ExecuteSqlCommand(

"EXEC [security].[sp_setContextInfo] {0}", rowLevelSecurityContext);

}

}

By the way, note that this solves both our problems: the call to sp_setContextInfo is never made when updating our database and no connection is opened in design-time mode.